We are pleased to announce that three of our papers have been accepted to the Sixth Arabic Natural Language Processing Workshop (WANLP 2021), co-located with EACL 2021. The papers were authored by our talented team members Tarek Naous, Wissam Antoun, Reem Mahmoud, and Fady Baly, under the supervision of Prof. Hazem Hajj. They target Arabic empathetic conversational agents, generative language models, and language understanding models.

Empathetic BERT2BERT Conversational Model:

Learning Arabic Language Generation with Little Data

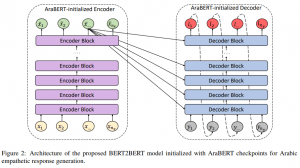

Our latest contribution to Arabic conversational AI leverages knowledge transfer from AraBERT in a BERT2BERT architecture. We address the challenges of the low-resource setting and achieve state-of-the-art results in open-domain empathetic response generation.

Paper: https://arxiv.org/abs/2103.04353

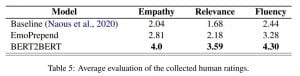

Abstract: Enabling empathetic behavior in Arabic dialogue agents is an important aspect of building human-like conversational models. While Arabic Natural Language Processing has seen significant advances in Natural Language Understanding (NLU) with language models such as AraBERT, Natural Language Generation (NLG) remains a challenge. The shortcomings of NLG encoder-decoder models are primarily due to the lack of Arabic datasets suitable to train NLG models such as conversational agents. To overcome this issue, we propose a transformer-based encoder-decoder initialized with AraBERT parameters. By initializing the weights of the encoder and decoder with AraBERT pre-trained weights, our model was able to leverage knowledge transfer and boost performance in response generation. To enable empathy in our conversational model, we train it using the ArabicEmpatheticDialogues dataset and achieve high performance in empathetic response generation. Specifically, our model achieved a low perplexity value of 17.0 and an increase of 5 BLEU points compared to the previous state-of-the-art model. Also, our proposed model was rated highly by 85 human evaluators, validating its high capability in exhibiting empathy while generating relevant and fluent responses in open-domain settings.
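For readers less familiar with the perplexity figure quoted in the abstract, here is a minimal sketch of how perplexity is commonly computed from per-token log-probabilities. The function name and the example probabilities are hypothetical and are not taken from the paper or its code; the paper's exact tokenization and evaluation setup may differ.

```python
import math


def perplexity(token_log_probs):
    """Perplexity = exp of the negative mean natural-log probability per token."""
    if not token_log_probs:
        raise ValueError("need at least one token log-probability")
    mean_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(mean_nll)


# Hypothetical probabilities a model assigned to the four tokens of a
# reference response (illustrative values only).
example_log_probs = [math.log(p) for p in (0.25, 0.5, 0.125, 0.25)]

print(perplexity(example_log_probs))
```

Lower is better: a model that spreads its probability uniformly over 17 candidate tokens at every step would score a perplexity of about 17.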

AraGPT2:

Pre-Trained Transformer for Arabic Language Generation

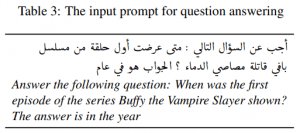

AraGPT2 is a 1.5B-parameter transformer model, the largest for Arabic, trained on 77GB of text for 9 days on a TPUv3-128. The model can generate news articles that are difficult to distinguish from human-written ones, and it shows impressive zero-shot performance on trivia question answering.

Paper: https://arxiv.org/abs/2012.15520

GitHub: https://github.com/aub-mind/arabert/tree/master/aragpt2

Abstract: Recently, pre-trained transformer-based architectures have proven to be very efficient at language modeling and understanding, given that they are trained on a large enough corpus. Applications in language generation for Arabic are still lagging in comparison to other NLP advances primarily due to the lack of advanced Arabic language generation models. In this paper, we develop the first advanced Arabic language generation model, AraGPT2, trained from scratch on a large Arabic corpus of internet text and news articles. Our largest model, AraGPT2-mega, has 1.46 billion parameters, which makes it the largest Arabic language model available. The Mega model was evaluated and showed success on different tasks including synthetic news generation, and zero-shot question answering. For text generation, our best model achieves a perplexity of 29.8 on held-out Wikipedia articles. A study conducted with human evaluators showed the significant success of AraGPT2-mega in generating news articles that are difficult to distinguish from articles written by humans. We thus develop and release an automatic discriminator model with a 98% accuracy in detecting model-generated text. The models are also publicly available, hoping to encourage new research directions and applications for Arabic NLP.

AraELECTRA:

Pre-Training Text Discriminators for Arabic Language Understanding

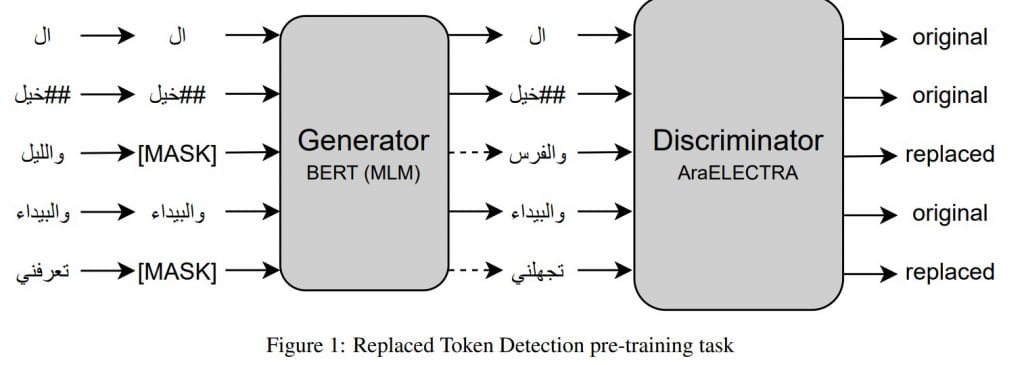

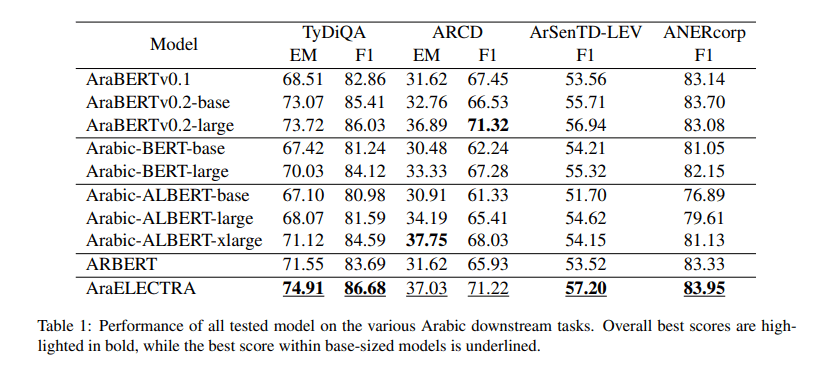

AraELECTRA is our latest advancement in Arabic language understanding. The model was trained on 77GB of Arabic text for 24 days. AraELECTRA achieves impressive performance, especially on question answering tasks.

Paper: https://arxiv.org/abs/2012.15516

GitHub: https://github.com/aub-mind/arabert/tree/master/araelectra

Abstract: Advances in English language representation enabled a more sample-efficient pre-training task by Efficiently Learning an Encoder that Classifies Token Replacements Accurately (ELECTRA). Instead of training a model to recover masked tokens, ELECTRA trains a discriminator model to distinguish true input tokens from corrupted tokens that were replaced by a generator network. On the other hand, current Arabic language representation approaches rely only on pretraining via masked language modeling. In this paper, we develop an Arabic language representation model, which we name AraELECTRA. Our model is pretrained using the replaced token detection objective on large Arabic text corpora. We evaluate our model on multiple Arabic NLP tasks, including reading comprehension, sentiment analysis, and named-entity recognition, and we show that AraELECTRA outperforms current state-of-the-art Arabic language representation models, given the same pretraining data and with even a smaller model size.
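To make the replaced token detection objective a little more concrete, the sketch below builds the per-token targets a discriminator would be trained to predict. It is only an illustration under simple assumptions: the helper name and the toy English sentence are hypothetical, the generator network is left out entirely (the corrupted sequence is written by hand), and this is not AraELECTRA's training code.

```python
def rtd_labels(original_tokens, corrupted_tokens):
    """Return 1 where a token differs from the original, 0 where it matches."""
    if len(original_tokens) != len(corrupted_tokens):
        raise ValueError("sequences must have the same length")
    return [int(o != c) for o, c in zip(original_tokens, corrupted_tokens)]


# Toy example: one position has been swapped, standing in for a sample
# drawn from the generator network.
original = ["the", "chef", "cooked", "the", "meal"]
corrupted = ["the", "chef", "ate", "the", "meal"]

labels = rtd_labels(original, corrupted)
print(labels)  # [0, 0, 1, 0, 0]
```

Because the labels come from comparing against the original text, a position where the generator happens to reproduce the original token counts as "original" rather than "replaced". The discriminator receives a training signal at every position, not only at the masked ones, which is where the sample efficiency mentioned in the abstract comes from.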

Acknowledgments:

This research was supported by the University Research Board (URB) at the American University of Beirut (AUB), and by the TFRC program, which we thank for the free access to cloud TPUs. We also thank As-Safir newspaper for the data access.
